The race to automate machine learning is heating up, and OpenAI just dropped PaperBench, a new benchmark designed to see if AI can truly replicate cutting-edge ML research.

PaperBench tests whether AI agents can read research papers, write the code, and then run experiments to reproduce the results. The benchmark comprises 20 papers from ICML 2024, spanning areas such as reinforcement learning, robustness, and probabilistic methods. Each paper comes with a detailed rubric, and together the rubrics specify 8,316 individually gradable tasks.

The agents have to build everything from scratch, working from the paper plus any clarifications to produce a complete, executable code repository, including the critical reproduce.sh file that runs the experiments. To ensure a fair test, they can’t borrow any code from the original authors. Evaluation is handled by SimpleJudge, an automated LLM-based judge, which itself scored an F1 of 0.83 on the JudgeEval validation dataset.
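For readers unfamiliar with the metric, here is a minimal sketch of how an F1 score compares a judge's pass/fail grades against human reference grades. The labels below are made up for illustration and are not JudgeEval data, and the function name `f1_score` is hypothetical; how PaperBench itself aggregates F1 across its validation set is not covered here.

```python
def f1_score(judge, human):
    """F1 of binary judge grades against human reference grades (1 = requirement met)."""
    pairs = list(zip(judge, human))
    tp = sum(1 for j, h in pairs if j == 1 and h == 1)  # judge and human both say "met"
    fp = sum(1 for j, h in pairs if j == 1 and h == 0)  # judge too lenient
    fn = sum(1 for j, h in pairs if j == 0 and h == 1)  # judge too strict
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    # F1 is the harmonic mean of precision and recall.
    return 2 * precision * recall / (precision + recall)


# Illustrative labels only (assumption, not real benchmark data).
human = [1, 1, 1, 0, 0, 1, 0, 1]
judge = [1, 1, 0, 0, 1, 1, 0, 1]
print(round(f1_score(judge, human), 2))  # 0.8
```

An F1 of 1.0 would mean the judge agrees with the human graders on every requirement it marks as met and misses none, so 0.83 indicates a useful but imperfect grader.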

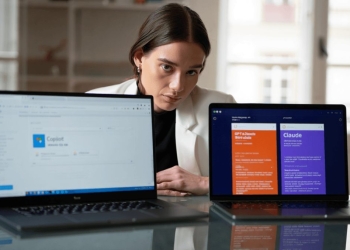

So, how did the models fare? Claude 3.5 Sonnet topped the charts with an average replication score of 21.0%. OpenAI’s GPT-4o lagged behind at 4.1%, and Gemini 2.0 Flash trailed further at 3.2%. For context, human ML experts hit 41.4% after 48 hours, showing there’s still a significant gap.
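To make the idea of an "average replication score" concrete, here is a rough sketch of how pass/fail grades on individual rubric tasks could roll up into one percentage per paper and then an average across papers. It assumes a weighted tree of requirements where each parent's score is the weighted average of its children; the class `RubricNode`, the weights, and the example requirements are all hypothetical, and the benchmark's actual scoring may differ.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RubricNode:
    """Hypothetical rubric node: leaves carry a pass/fail grade, parents carry children."""
    name: str
    weight: float = 1.0
    passed: Optional[bool] = None  # only meaningful on leaves
    children: List["RubricNode"] = field(default_factory=list)


def score(node: RubricNode) -> float:
    """Leaf: 1.0 if passed, else 0.0. Parent: weighted average of child scores."""
    if not node.children:
        return 1.0 if node.passed else 0.0
    total_weight = sum(c.weight for c in node.children)
    return sum(c.weight * score(c) for c in node.children) / total_weight


def average_score(papers: List[RubricNode]) -> float:
    """Mean replication score across papers (assumes a non-empty list)."""
    return sum(score(p) for p in papers) / len(papers)


# Made-up rubric for a single paper (illustration only).
paper = RubricNode("paper", children=[
    RubricNode("code written", weight=2, children=[
        RubricNode("training loop implemented", passed=True),
        RubricNode("baseline implemented", passed=False),
    ]),
    RubricNode("results match the paper", weight=1, passed=False),
])

print(round(score(paper), 3))  # 0.333
```

Under this kind of scheme an agent earns partial credit for writing correct code even when its final results do not match the paper, which is one way a score like 21.0% can arise without any paper being fully replicated.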

The analysis revealed that AI shines initially with rapid code generation and experimental setup but fades over time, struggling with sustained tasks, troubleshooting, and strategic adjustments. For broader use, OpenAI is also offering PaperBench Code-Dev, which focuses on code correctness without requiring full experimental runs, reducing costs.

OpenAI’s open-sourcing of PaperBench should drive further research into autonomous AI capabilities. The benchmark offers a detailed environment for assessing AI agents and understanding their strengths and limitations relative to human researchers.